Overview

In this project, I drew inspiration from the AlexNet and LeNet architectures to build a compact convolutional neural network (CNN) for the CIFAR-10 dataset in PyTorch. To improve the model's performance, I experimented with different optimizers and used image preprocessing techniques to improve the quality of the input data.

You can find my code here.

Project Instructions

- You are not allowed to install or use packages not included by default in the Colab Environment.

- You are not allowed to use any pre-defined architectures or feature extractors in your network.

- You are not allowed to use any pretrained weights, i.e., no transfer learning.

- You cannot train on the test data.

Model Architecture

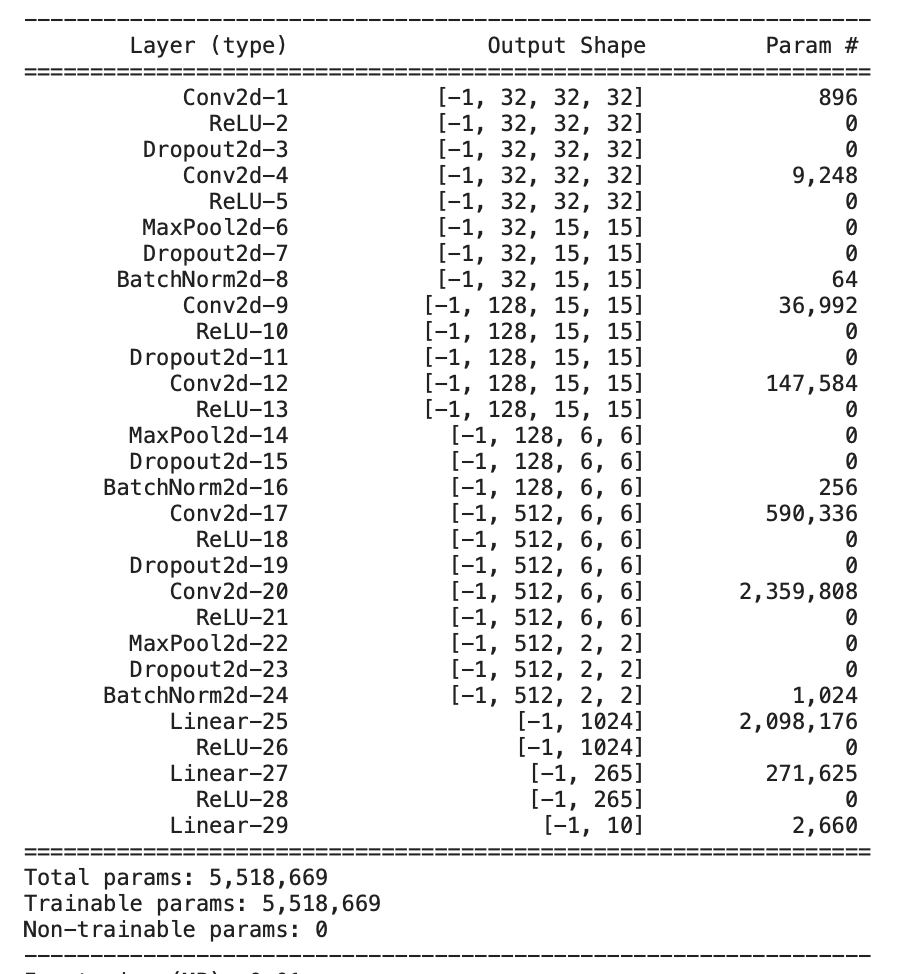

I built a CNN with six convolutional layers and added a dropout layer with a Bernoulli parameter (drop probability) of 0.2 after each convolutional layer. Dropout randomly zeroes activations during training, which discourages units from co-adapting, reducing overfitting and improving generalization. I then applied batch normalization after alternate convolutional layers to reduce internal covariate shift and stabilize the network during training.
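As a minimal sketch (not the exact code from the project), one convolutional block of this kind could be written as below. The helper name `conv_block`, the 3x3 kernel, the padding, the channel counts, and the exact order of ReLU, dropout, and batch normalization are all assumptions made for illustration.

```python
import torch
import torch.nn as nn


def conv_block(in_ch, out_ch, use_batchnorm):
    """Hypothetical helper: Conv -> ReLU -> Dropout(p=0.2) -> optional BatchNorm."""
    layers = [
        # Assumed 3x3 kernel; padding=1 keeps the height and width unchanged.
        nn.Conv2d(in_ch, out_ch, kernel_size=3, padding=1),
        nn.ReLU(),
        # Each activation is zeroed with probability 0.2 during training.
        nn.Dropout(p=0.2),
    ]
    if use_batchnorm:
        # Enabled only on alternate blocks in this sketch.
        layers.append(nn.BatchNorm2d(out_ch))
    return nn.Sequential(*layers)


block = conv_block(3, 8, use_batchnorm=True)
x = torch.randn(4, 3, 32, 32)  # a fake batch of four CIFAR-10-sized images
y = block(x)
print(y.shape)  # torch.Size([4, 8, 32, 32])
```

Remember to call `model.eval()` before evaluating, since both dropout and batch normalization behave differently in training and evaluation mode.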

Influenced by the architecture of AlexNet, I progressively increased the number of output channels by a factor of 4 moving deeper into the convolutional layers. This allows the network to capture more complex features at greater depth. To downsample the feature maps, I applied max pooling after every two convolutional layers. Each pooling step halves the spatial resolution while retaining the strongest activations.
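To make the shape arithmetic concrete, here is a small sketch that traces a 32x32 input through six convolutions with max pooling after every second one. The channel widths (8, 32, 128) and the choice to grow them by 4x once per pair of layers are assumptions for illustration; dropout and batch normalization are left out to keep the focus on shapes.

```python
import torch
import torch.nn as nn

# Hypothetical widths: the input has 3 channels, then x4 growth per pair of layers.
channels = [3, 8, 8, 32, 32, 128, 128]

layers = []
for i in range(6):
    layers.append(nn.Conv2d(channels[i], channels[i + 1], kernel_size=3, padding=1))
    layers.append(nn.ReLU())
    if i % 2 == 1:
        # After every second convolution, halve the height and width.
        layers.append(nn.MaxPool2d(kernel_size=2))
features = nn.Sequential(*layers)

x = torch.randn(1, 3, 32, 32)
shapes = []
for layer in features:
    x = layer(x)
    if isinstance(layer, nn.MaxPool2d):
        shapes.append(tuple(x.shape))

print(shapes)  # [(1, 8, 16, 16), (1, 32, 8, 8), (1, 128, 4, 4)]
```

With these assumed widths, the flattened feature vector passed to the dense layers would have 128 * 4 * 4 = 2048 entries.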

After the convolutional layers come three fully connected (linear) layers. I used the rectified linear unit (ReLU) as the activation function, with no activation on the last layer. Finally, I chose Adam as my optimizer with a learning rate of 0.001. By combining these design choices with techniques inspired by AlexNet, I aimed to create a robust and well-performing CNN for CIFAR-10.
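The dense head and optimizer setup can be sketched roughly as follows. The hidden sizes (256 and 64) and the 128x4x4 input feature shape are assumptions, not values from the project; only the three linear layers, ReLU between them, the absence of a final activation, and Adam with a learning rate of 0.001 come from the description above.

```python
import torch
import torch.nn as nn

classifier = nn.Sequential(
    nn.Flatten(),
    nn.Linear(128 * 4 * 4, 256),  # assumed input size and hidden width
    nn.ReLU(),
    nn.Linear(256, 64),           # assumed hidden width
    nn.ReLU(),
    nn.Linear(64, 10),            # 10 CIFAR-10 classes, raw logits, no activation
)

optimizer = torch.optim.Adam(classifier.parameters(), lr=0.001)
loss_fn = nn.CrossEntropyLoss()

# One training step on random stand-in data.
features = torch.randn(8, 128, 4, 4)
labels = torch.randint(0, 10, (8,))

logits = classifier(features)
loss = loss_fn(logits, labels)

optimizer.zero_grad()
loss.backward()
optimizer.step()

print(logits.shape)  # torch.Size([8, 10])
```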

Improvements

- Initially, I added a softmax function to the last layer of my neural network. After a long and painful journey of debugging and reading the PyTorch documentation, I realised that `nn.CrossEntropyLoss()` already does the softmax for you in the loss computation! It also takes class indices as targets, so there's really no need to one-hot encode. I dropped the final softmax activation function, which improved my results.

- I used `transforms.RandomHorizontalFlip()` and `transforms.RandomCrop()` when preprocessing CIFAR-10 to introduce data augmentation.

- I increased the batch size to 128 to make training faster.

Test Accuracy

[Figures: test accuracy]